The development of artificial neural networks, a subfield of AI, originally sought to mimic parts of the brain. Tiedemann noted: "We are a long way from that. Artificial neural networks actually have nothing in common with our brain. But that's why we talk about artificial intelligence in the first place. But we have managed to generate decidedly intelligent behaviour." Self-learning algorithms now outperform humans in terms of speed and accuracy when analyzing large amounts of data.

Artificial intelligence (AI) is undergoing rapid developments and more and more fields of applications are emerging given high-speed computer power. Powerful AI that surpasses human intelligence may become reality in the mid-21st century - a hundred years after British mathematician Alan Turing developed the Turing Test (1950) to gauge a machine's ability to show intelligent behaviour similar to or indistinguishable from that of a human. The test amounts to a first definition of artificial intelligence. However, weak AI is common nowadays and although it is not particularly intelligent, it is highly efficient. Weak AI offers solutions for specific applications and can be far better than humans. Tim Tiedemann, Professor of Intelligent Sensor Technology at the Department of Computer Science at the Hamburg University of Applied Sciences (HAW Hamburg), outlines its feats nowadays and possible upcoming achievements.

AI and the human brain

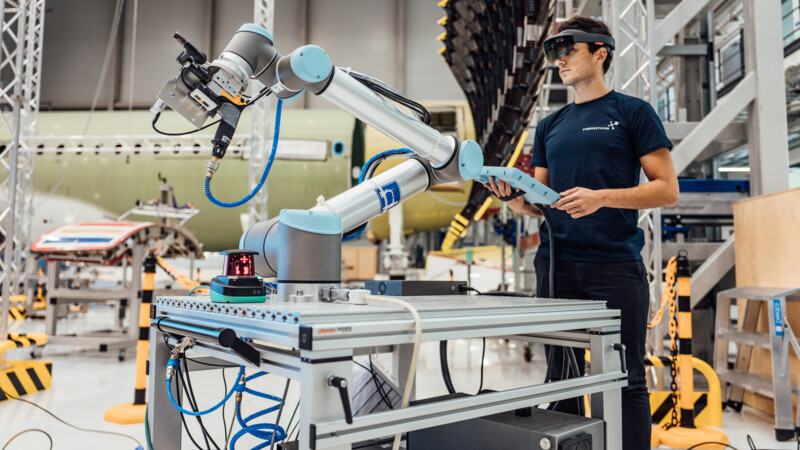

AI and autonomous driving

Autonomous driving is proving a promising field of application. Cameras and sensors register the environment, and AI evaluates the data, frequently pixel by pixel. Because AI arrives at a result in this way, the system can easily be outsmarted by changing individual pixels, according to Tiedemann. "Unlike humans, who learn the concept of traffic signs, for instance, AI is based on simple mathematical rules, and counts colourful pixels, as it were, and then arrives at the result, e.g. a stop sign or a right-of-way sign." Even small stickers on road signs can thus lead to misinterpretations.
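The "counting colourful pixels" picture can be made concrete with a deliberately simplified sketch. Everything in it is an assumption for illustration: the 6x6 letter grid standing in for a camera image, the 60 percent red threshold, and the function names `classify` and `add_sticker`. Real perception systems use learned models rather than a fixed colour count, but the failure mode is similar in spirit.

```python
# Toy illustration only: a hypothetical rule-based "classifier" that counts red pixels.
# R = red, W = white, Y = yellow. The grid stands in for a camera image of a stop sign.
STOP_SIGN = [
    "WRRRRW",
    "RRRRRR",
    "RWWWWR",
    "RWWWWR",
    "RRRRRR",
    "WRRRRW",
]


def classify(image, red_threshold=0.6):
    """Label the image a stop sign if the share of red pixels reaches the threshold."""
    pixels = "".join(image)
    red_share = pixels.count("R") / len(pixels)
    return "stop sign" if red_share >= red_threshold else "not a stop sign"


def add_sticker(image, top, left, size=2, colour="Y"):
    """Return a copy of the image with a size x size patch overwritten by one colour."""
    rows = [list(row) for row in image]
    for r in range(top, top + size):
        for c in range(left, left + size):
            rows[r][c] = colour
    return ["".join(row) for row in rows]


clean_label = classify(STOP_SIGN)                        # 24 of 36 pixels are red
stickered_label = classify(add_sticker(STOP_SIGN, 0, 1))  # 20 of 36 pixels are red
print(clean_label, "|", stickered_label)
```

In this sketch a yellow sticker covering 4 of 36 pixels is enough to push the red share below the assumed threshold, so the same sign is no longer recognised, whereas a human would not be misled.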

AI in security

Tiedemann's research focuses on making AI systems as robust as possible. "As soon as a weak point is recognised, a suitable solution can be developed," the professor stressed. When it comes to traffic signs, more intensive training to recognise the signs correctly could help prevent AI from being deceived. Two separate AI systems could be used in areas where security is vital. The first performs the actual AI task, and the second analyses the first system and checks it for anomalies such as a possible attack.
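One way to picture the two-system idea is a second component that watches the first one's outputs and flags values that fall far outside what it saw during normal operation. The sketch below is an assumption-laden illustration, not the method used in Tiedemann's research: the names `primary_classifier` and `AnomalyMonitor`, the confidence formula, the baseline values and the z-score limit of 3 are all hypothetical.

```python
from statistics import mean, stdev


def primary_classifier(red_share):
    """Hypothetical first system: maps a red-pixel share to (label, confidence)."""
    label = "stop sign" if red_share >= 0.5 else "other sign"
    confidence = abs(red_share - 0.5) * 2  # 0.0 = undecided, 1.0 = certain
    return label, confidence


class AnomalyMonitor:
    """Hypothetical second system: checks the first system's confidence values."""

    def __init__(self, baseline, z_limit=3.0):
        # Baseline confidences are assumed to come from known-good operation.
        self.mu = mean(baseline)
        self.sigma = stdev(baseline)
        self.z_limit = z_limit

    def is_anomalous(self, confidence):
        """Flag a confidence that lies more than z_limit standard deviations from the baseline mean."""
        if self.sigma == 0:
            return confidence != self.mu
        return abs(confidence - self.mu) / self.sigma > self.z_limit


monitor = AnomalyMonitor(baseline=[0.80, 0.84, 0.78, 0.82, 0.81, 0.79])

for red_share in (0.90, 0.55):  # an ordinary reading, then a suspicious one
    label, confidence = primary_classifier(red_share)
    print(label, round(confidence, 2), "anomalous:", monitor.is_anomalous(confidence))
```

The design choice here is that the monitor never re-does the classification. It only judges whether the first system is behaving as it usually does, so a manipulation that confuses the classifier does not automatically confuse the check.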

Research into the future of networked mobility

"The real problem is 'we don't know what we don't know'." Far more tests of autonomous driving are needed to learn more about the capabilities of autonomous systems, their potential weak points and loopholes. To this end, Tiedemann and his fellow researchers are working on alternatives in the so-called autosys laboratory. Work starts on a small scale, with miniature vehicles simulating the networked mobility of the future. The lab is collaborating with Miniatur Wunderland in Hamburg to test sensors and AI in a dynamic environment that is as realistic as possible. "If one of our cars hits a wall here, it doesn't matter. We are also testing boats and air taxis."

More or less intelligence

Tiedemann and his fellow researchers are also developing special sensor systems for the Testfield Intelligent Neighborhood Mobility (TIQ). Data is to be collected at Berliner Tor and in Lohmühlen Park near the HAW campus to determine the mobility needs of traffic-calmed districts. Assistance systems for cyclists and the blind as well as autonomous driving systems are to be tested, making the scheme one of 42 anchor projects at the ITS World Congress 2021, which Hamburg is hosting in October. During the data collection phase, special emphasis will be placed on participants' privacy, which is why Tiedemann is using the simplest sensors available. "The sensors should be just good enough to distinguish road users, but not so good as to identify them." Normally, intelligent sensors or cameras are used and their data is 'rendered harmless' by downstream AI. "But what happens in the event of an error or hack?" asked Tiedemann. With simple sensors, the data stays safe because even the smartest AI cannot identify individuals from simple, coarse data. Simple is apparently sometimes smartest.
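The principle of "just good enough to distinguish, not good enough to identify" can be illustrated with a small sketch. It is not a description of the TIQ sensors: the 4x4 occupancy grids, the 2x2 coarsening and the names `coarsen` and `road_user_type` are assumptions chosen to show how detail that never leaves the sensor cannot be recovered later.

```python
# Toy illustration only: fine-grained occupancy grids (1 = something detected in that cell).
PERSON_A = [[0, 1, 0, 0], [0, 1, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
PERSON_B = [[1, 0, 0, 0], [1, 1, 0, 0], [0, 0, 0, 0], [0, 0, 0, 0]]
CAR = [[1, 1, 1, 1], [1, 1, 1, 1], [0, 0, 0, 0], [0, 0, 0, 0]]


def coarsen(grid, block=2):
    """Reduce resolution: a coarse cell is 1 if any fine cell in its block is occupied.

    Assumes a square grid whose side length is a multiple of `block`.
    """
    size = len(grid)
    return [
        [
            int(any(grid[r][c]
                    for r in range(top, top + block)
                    for c in range(left, left + block)))
            for left in range(0, size, block)
        ]
        for top in range(0, size, block)
    ]


def road_user_type(coarse):
    """Hypothetical rule: tell road users apart by how many coarse cells they occupy."""
    occupied = sum(map(sum, coarse))
    if occupied == 0:
        return "none"
    return "pedestrian" if occupied == 1 else "vehicle"


for name, grid in (("person A", PERSON_A), ("person B", PERSON_B), ("car", CAR)):
    print(name, coarsen(grid), road_user_type(coarsen(grid)))
```

In this sketch the two people differ in the fine grid but produce the same coarse reading, while the car still stands out, so the type of road user survives the loss of detail and the individual does not.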

ys/kk/pb
